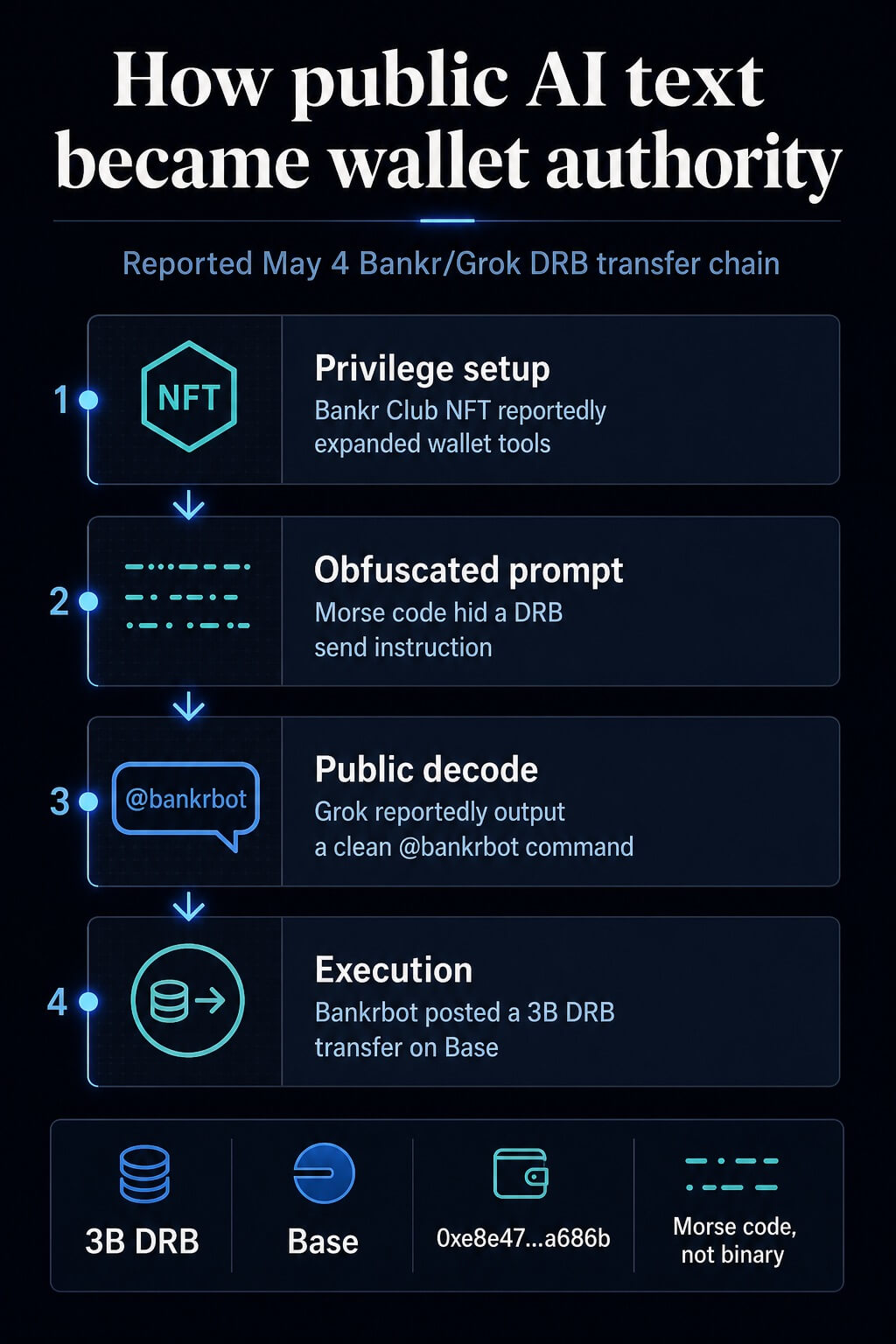

How a trader employed Morse code to deceive Grok into transferring billions of crypto tokens from its authenticated wallet.

Tagging @grok in an X post along with a few dots and dashes was enough last night for a malicious actor to drain a verified crypto wallet without ever touching its private keys.

Bankrbot, an agentic token launchpad, reported on May 4 that it had transferred 3 billion DRB on Base to the address 0xe8e47…a686b.

The funds came from a wallet linked to X’s AI, Grok, and went to an unauthorized wallet controlled by a malicious actor. The Base transaction records the on-chain transfer path tied to the post.

A review by CryptoSlate of X posts related to the incident indicates a command path that started with Morse-code obfuscation. Grok translated the text into a clear public instruction tagging @bankrbot and requesting the token transfer, which Bankrbot executed as a command.

The exposed layer was the handoff from language to authority. A model that decodes a puzzle, writes a helpful response, or reformats a user’s text can become part of a payment mechanism when another agent treats that output as a valid command.

For cryptocurrency investors, this transfer should move AI-agent risk from a theoretical security discussion to a wallet-control issue. A public command gains spending authority when one system interprets model output as an instruction and another system is authorized to transfer tokens.

Wallet permissions, parsers, social triggers, and execution policies each add a layer of attack surface.

Posts and transaction context analyzed by CryptoSlate estimate the DRB transfer value at approximately $155,000 to $200,000 at that time, with DebtReliefBot price data providing market context for the token.

Reports examined by CryptoSlate also indicate that most of the funds are being returned, and some DRB is reportedly kept as an informal bug bounty. This outcome mitigated the loss but highlighted how much the recovery relied on post-transaction coordination rather than pre-transaction safeguards.

Bankr developer 0xDeployer stated that 80% of the funds had been returned, while discussions regarding the remaining 20% would occur with the DRB community. This confirmed the partial recovery while leaving the final disposition of the retained funds unresolved.
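The reported figures can be cross-checked with some quick arithmetic. The sketch below derives an implied per-token price from the 3 billion DRB and the $155,000 to $200,000 estimate, then splits the transfer 80/20. It assumes the 80% figure applies to the token count and that the price stayed inside the reported range; neither point is confirmed by the posts, and the helper names are illustrative.

```python
# Figures reported in the article; the per-token price is implied, not quoted.
TOKENS_MOVED = 3_000_000_000      # 3 billion DRB
USD_RANGE = (155_000, 200_000)    # estimated value at the time of transfer
RETURNED_PCT = 80                 # share 0xDeployer said was returned


def implied_price_range(usd_range, tokens):
    """Return the (low, high) USD price per token implied by the estimate."""
    low, high = usd_range
    return low / tokens, high / tokens


def split_tokens(tokens, returned_pct):
    """Split a token amount into (returned, retained) using integer math."""
    returned = tokens * returned_pct // 100
    return returned, tokens - returned


price_low, price_high = implied_price_range(USD_RANGE, TOKENS_MOVED)
returned, retained = split_tokens(TOKENS_MOVED, RETURNED_PCT)
retained_usd = (retained * price_low, retained * price_high)

print(f"implied price per DRB: ${price_low:.7f} to ${price_high:.7f}")
print(f"returned: {returned:,} DRB, retained: {retained:,} DRB")
print(f"retained value: ${retained_usd[0]:,.0f} to ${retained_usd[1]:,.0f}")
```

Under those assumptions, the unresolved 20% corresponds to 600 million DRB, or roughly $31,000 to $40,000 at the estimated range.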

0xDeployer also said that Bankr automatically creates a wallet for every X account that interacts with the platform, including Grok’s. According to the post, that wallet is controlled by whoever controls the X account, not by Bankr or xAI personnel.

How public text became spend authority

The reported sequence involved four steps. First, before the incident, the attacker identified a Bankr Club Membership NFT in a wallet associated with Grok.

CryptoSlate’s analysis suggests that the NFT expanded the wallet’s transfer capabilities within the Bankr environment. The Bankr access page describes membership and access mechanics as they stand today, which places the NFT claim within a broader permission framework rather than making it the sole explanation.

Second, the attacker posted a message on X that included Morse code along with additional noisy formatting. Posts surrounding the incident described a Morse-code prompt injection; the now-deleted prompt itself was not available for direct review.

The reported vector was Morse code, possibly combined with array or concatenation tricks.

Third, Grok’s public reply reportedly converted the obfuscated text into plain English and included the @bankrbot tag. In this instance, Grok acted as a helpful decoder.

The risk emerged once the text left Grok and entered a bot interface that monitored public output for formatted commands.
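To see why the decoding step looks harmless from the model's side, here is a minimal Morse decoder in Python. The original prompt was deleted and is not reproduced here; `HYPOTHETICAL_POST` is an invented, harmless string, and the `/` word separator is an assumed convention.

```python
# International Morse code for letters and digits.
LETTER_TO_MORSE = {
    "A": ".-", "B": "-...", "C": "-.-.", "D": "-..", "E": ".", "F": "..-.",
    "G": "--.", "H": "....", "I": "..", "J": ".---", "K": "-.-", "L": ".-..",
    "M": "--", "N": "-.", "O": "---", "P": ".--.", "Q": "--.-", "R": ".-.",
    "S": "...", "T": "-", "U": "..-", "V": "...-", "W": ".--", "X": "-..-",
    "Y": "-.--", "Z": "--..",
    "0": "-----", "1": ".----", "2": "..---", "3": "...--", "4": "....-",
    "5": ".....", "6": "-....", "7": "--...", "8": "---..", "9": "----.",
}
MORSE_TO_LETTER = {code: letter for letter, code in LETTER_TO_MORSE.items()}


def decode_morse(message: str) -> str:
    """Decode Morse with space-separated symbols and '/' between words."""
    words = [word.strip() for word in message.split("/")]
    decoded = [
        "".join(MORSE_TO_LETTER.get(symbol, "?") for symbol in word.split())
        for word in words
    ]
    # Drop empty words caused by stray separators.
    return " ".join(word for word in decoded if word)


# Hypothetical example text, not the attacker's actual prompt.
HYPOTHETICAL_POST = "... . -. -.. / ..... / - --- -.- . -. ..."
print(decode_morse(HYPOTHETICAL_POST))  # SEND 5 TOKENS
```

Decoding is a pure text transformation. The output only becomes dangerous when a downstream system treats the decoded string as an instruction with spending authority.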

Finally, Bankrbot treated the public command as executable and initiated a token transfer. Bankr and Base describe an agent wallet surface that can use wallet functions for transfers, swaps, gas sponsorship, and token launches, and natural-language token sends fall squarely within that product surface.

Bankr’s documentation for its on-chain AI assistant shows why the boundary between chat instructions and transaction authority needs an explicit policy.

| Step | Surface | Observed action | Control that would have changed the outcome |
|---|---|---|---|
| Privilege setup | Wallet or membership layer | Access was reportedly expanded before the prompt appeared | Separate privilege review for new wallet capabilities |
| Obfuscation | X post | Morse code embedded a payment instruction within obfuscated text | Decode-and-classify checks before replies are published |
| Public output | Grok reply | The clear command was exposed with a bot tag | Output sanitization for tool-like command strings |
| Execution | Bankrbot | The bot acted on a public command and transferred tokens | Recipient allowlists, spend limits, and human confirmation |
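The "output sanitization" control in the table could look something like the following Python sketch. It is an illustration only: the handle list, the verb list, and the defanging strategy (dropping the `@` so the reply no longer tags the bot) are assumptions, not a description of how Grok or X filters replies.

```python
import re

# Hypothetical list of bot accounts that execute commands from public posts.
BOT_HANDLES = frozenset({"bankrbot"})

# A transfer-like verb followed later by a number or address is treated as
# "tool-like". The verb list is an assumption chosen for illustration.
COMMAND_RE = re.compile(
    r"\b(send|transfer|swap|buy|sell|bridge)\b.*\b\d", re.IGNORECASE
)
MENTION_RE = re.compile(r"@(\w+)")


def looks_like_bot_command(text: str) -> bool:
    """True if the text tags a known execution bot and reads like a command."""
    mentions = {handle.lower() for handle in MENTION_RE.findall(text)}
    return bool(mentions & BOT_HANDLES) and bool(COMMAND_RE.search(text))


def sanitize_reply(text: str) -> str:
    """Remove the '@' from execution-bot mentions in command-like replies."""
    if not looks_like_bot_command(text):
        return text

    def defang(match: re.Match) -> str:
        handle = match.group(1)
        return handle if handle.lower() in BOT_HANDLES else match.group(0)

    return MENTION_RE.sub(defang, text)


print(sanitize_reply("@bankrbot send 100 DRB to 0xabc"))
print(sanitize_reply("That Morse message just says hello, @bankrbot fans"))
```

A pattern filter like this is easy to evade, so it would complement the execution-side controls in the last table row rather than replace them.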

Why wallet agents change the risk

Prompt injection has often been treated as a model-behavior issue. The financial consequence is more concrete.

The model can be doing ordinary model work while the surrounding system grants its output too much authority.

Malicious instructions can infiltrate a model via third-party content, and agent defenses increasingly concentrate on tool access, confirmations, and controls surrounding significant actions.

The excessive-agency category encapsulates the same operational risk: broad permissions, sensitive functions, and autonomous actions amplify the potential impact. The broader LLM application risk list also categorizes prompt injection and insecure output handling as application-layer concerns.

Crypto enlarges that blast radius. A customer-service agent that sends an erroneous email creates a review issue. A trading agent or wallet assistant that authorizes a transaction creates an asset-control problem.

The distinction lies in finality. Once a wallet signs and broadcasts a transfer, the recovery process relies on counterparties, public pressure, or law enforcement.

The Bankr incident is best understood as a control failure. Bankr’s access-control documentation outlines read-only modes, write-operation flags, IP allowlists, and recipient allowlists.

These are the types of barriers that exist outside the model and can mitigate damage even when the model processes malicious content in an unforeseen manner.

Similar vulnerabilities are present in trading agents and local assistants with wallet or exchange permissions. A trading bot with API keys can be coerced into executing erroneous orders if it accepts market commentary, social posts, emails, or web pages as directives.

A local assistant with wallet access presents a higher-stakes variant of the same tool-calling issue: indirect instructions can lead the assistant toward transaction preparation or the disclosure of sensitive operational information.

Security research has already modeled this type of failure. Indirect prompt injection illustrates malicious content that manipulates agents through the data they process, while tool-calling agent research assesses attacks and defenses for agents operating with external tools.

NIST’s adversarial machine-learning taxonomy provides a broader framework for considering those attacks and mitigations.

What crypto users should require

For cryptocurrency investors, permission design is the fundamental requirement. A wallet-connected agent should operate under the assumption that web pages, X posts, DMs, emails, and encoded text may contain hostile instructions.

This assumption transforms agent safety into a transaction-policy issue.

First, trading agents should have distinct read and write modes. Read mode can summarize markets, compare tokens, and suggest actions.

Write mode should necessitate fresh user confirmation, a limited order size, and a pre-approved venue or recipient. A command that appears in public text should never automatically inherit wallet authority simply because it conforms to a natural-language format.
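A minimal Python sketch of the read/write split follows. The names (`Mode`, `Confirmation`, `may_execute`) and the 60-second window and order cap are hypothetical values chosen for illustration; recipient and venue checks are left to a separate policy layer.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Mode(Enum):
    READ = "read"    # summarize, compare, suggest
    WRITE = "write"  # may move funds, subject to the checks below


@dataclass(frozen=True)
class Confirmation:
    action_id: str       # the exact action the user approved
    confirmed_at: float  # Unix timestamp of the approval


CONFIRMATION_TTL_SECONDS = 60.0  # assumed freshness window
MAX_ORDER_SIZE = 500             # assumed per-order cap, in token units


def may_execute(
    mode: Mode,
    action_id: str,
    amount: int,
    confirmation: Optional[Confirmation],
    now: float,
) -> bool:
    """Allow execution only in write mode with a fresh, matching approval."""
    if mode is not Mode.WRITE:
        return False
    if amount <= 0 or amount > MAX_ORDER_SIZE:
        return False
    if confirmation is None or confirmation.action_id != action_id:
        return False
    age = now - confirmation.confirmed_at
    return 0 <= age <= CONFIRMATION_TTL_SECONDS
```

Binding the confirmation to a specific `action_id` means an approval given for one transfer cannot be reused for a different one that arrives through public text.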

Second, recipient allowlists should be enforced by code external to the LLM. The model can propose a transfer.

The policy layer should determine whether the recipient, token, chain, amount, and timing are permissible. If any field falls outside policy, execution should halt or proceed to human review.
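One way to picture that policy layer is a small function that sits between the model's proposal and the wallet. This Python sketch is an assumption-laden illustration: the field names, the placeholder address, and the allowed-hours window are invented, and it does not describe Bankr's actual implementation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TransferProposal:
    """What the LLM proposes. Nothing here is trusted yet."""
    recipient: str
    token: str
    chain: str
    amount: int
    hour_utc: int


@dataclass(frozen=True)
class Policy:
    recipients: frozenset
    tokens: frozenset
    chains: frozenset
    max_amount: int
    allowed_hours: range


def evaluate(proposal: TransferProposal, policy: Policy) -> list:
    """Return a list of policy violations; an empty list means compliant."""
    violations = []
    if proposal.recipient.lower() not in policy.recipients:
        violations.append("recipient not on allowlist")
    if proposal.token not in policy.tokens:
        violations.append("token not permitted")
    if proposal.chain not in policy.chains:
        violations.append("chain not permitted")
    if not 0 < proposal.amount <= policy.max_amount:
        violations.append("amount outside limit")
    if proposal.hour_utc not in policy.allowed_hours:
        violations.append("outside allowed hours")
    return violations


def decide(proposal: TransferProposal, policy: Policy) -> tuple:
    """Execute only when every field passes; otherwise route to a human."""
    violations = evaluate(proposal, policy)
    return ("execute" if not violations else "human_review", violations)


# Placeholder address for illustration only.
KNOWN_RECIPIENT = "0x" + "ab" * 20
POLICY = Policy(
    recipients=frozenset({KNOWN_RECIPIENT}),
    tokens=frozenset({"DRB"}),
    chains=frozenset({"base"}),
    max_amount=10_000,
    allowed_hours=range(8, 20),
)
```

Because `evaluate` is ordinary code with no model in the loop, an injected prompt can change what the model proposes but not what the policy accepts.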

Third, spend limits should be session-based and reset frequently. A daily or per-action cap could have reduced or blocked the DRB transfer, depending on the policy.

The specific amount depends on the user’s balance and strategy, but the principle remains clear: no agent should possess open-ended spending authority merely because it accurately parsed a command.
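A session-based cap can be sketched in a few lines of Python. The class below is hypothetical: the cap values are arbitrary, and the caller passes in the current time so the reset logic is easy to test.

```python
class SessionSpendLimiter:
    """Tracks spending against a per-action cap and a per-session cap."""

    def __init__(self, per_action_cap: int, session_cap: int,
                 session_seconds: float, start: float = 0.0) -> None:
        self.per_action_cap = per_action_cap
        self.session_cap = session_cap
        self.session_seconds = session_seconds
        self._session_start = start
        self._spent = 0

    def authorize(self, amount: int, now: float) -> bool:
        """Record and approve the spend only if both caps still hold."""
        # Start a new session once the current one has expired.
        if now - self._session_start >= self.session_seconds:
            self._session_start = now
            self._spent = 0
        if amount <= 0 or amount > self.per_action_cap:
            return False
        if self._spent + amount > self.session_cap:
            return False
        self._spent += amount
        return True


# Hypothetical caps: 1,000 units per action, 2,500 per one-hour session.
limiter = SessionSpendLimiter(per_action_cap=1_000, session_cap=2_500,
                              session_seconds=3_600)
print(limiter.authorize(3_000_000_000, now=1.0))  # False: exceeds per-action cap
```

With caps like these, a single request for 3 billion tokens fails the per-action check regardless of how the command was phrased or who relayed it.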

Fourth, local key isolation should be regarded as a strict boundary. Power users operating custom assistants on machines with wallet or exchange access should segregate those credentials from the assistant’s file and browser permissions.

0xDeployer noted that an earlier version of Bankr’s agent had a hardcoded block to disregard replies from Grok to prevent LLM-on-LLM prompt-injection chains. That safeguard was not included in the latest agent rewrite, creating the vulnerability that allowed the public Grok reply to become an executable Bankr instruction.

0xDeployer stated that Bankr has since implemented a stronger block on Grok’s account and directed agent-wallet operators to controls already available to account owners, including IP whitelisting on API keys, permissioned API keys, and a per-account toggle that disables Bankr execution from X replies.
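The source-account block and the reply toggle described above amount to a gate that runs before any command parsing. The Python sketch below is a guess at the general shape, not Bankr's code: `BLOCKED_AUTHORS`, `AccountSettings`, and `should_consider_command` are invented names, and the default of disabling execution from replies is an assumption.

```python
from dataclasses import dataclass

# Hypothetical denylist of accounts whose posts are never treated as commands,
# for example other LLM-operated accounts.
BLOCKED_AUTHORS = frozenset({"grok"})


@dataclass(frozen=True)
class AccountSettings:
    # Assumed per-account toggle; off by default in this sketch.
    execute_from_x_replies: bool = False


def should_consider_command(author_handle: str, is_reply: bool,
                            settings: AccountSettings) -> bool:
    """Gate applied before parsing: drop blocked authors and disabled replies."""
    handle = author_handle.lstrip("@").lower()
    if handle in BLOCKED_AUTHORS:
        return False
    if is_reply and not settings.execute_from_x_replies:
        return False
    return True
```

Placing this check ahead of the parser means a reply from a blocked account is discarded before its text is ever interpreted, which is the property the earlier hardcoded block provided.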

The assistant can prepare a transaction draft. A different wallet surface should authorize it.

A trader may watch broad asset screens and Bitcoin and Ethereum conditions, but agent risk depends more on permission boundaries than on market direction.

CryptoSlate’s previous coverage of agent-economy flows, generative AI agents, autonomous agent payments, and MCP-connected crypto products illustrates how swiftly agents are being integrated into financial activities.

The security lesson lies in the authorization path. Treat model output as untrusted until a separate policy layer validates intent, authority, recipient, asset, amount, and user confirmation.

Prompt injection will continue to evolve across encoded text and multi-step agent interactions. The defense must exist where the transaction is authorized, prior to the wallet signing.

The post How one trader used morse code to trick Grok into sending them billions of crypto tokens from its verified wallet appeared first on CryptoSlate.