Why Integrating OpenAI with DeFi Falls Short

Key Takeaways:

- Integrating OpenAI into DeFi does not decentralize AI; it merely adds another interface layer.

- Genuine on-chain AI necessitates data attribution, transparent governance, and verifiable actions of agents.

- Initial adoption will concentrate on wallet bots and trading assistants, while more extensive infrastructure integrations are still years away.

There is much discussion surrounding OpenAI and DeFi, but wiring an OpenAI model into a smart contract does not amount to authentic on-chain intelligence. It may look impressive in a presentation. However, what if crypto AI requires more than just an OpenAI plugin to truly transform decentralized systems?

Ram Kumar, Core Contributor at OpenLedger, elaborated in an interview with Cryptonews on why authentic on-chain AI demands significantly deeper integration, including data attribution and governance of models.

Beyond OpenAI Plugins: The Importance of On-Chain Intelligence

Currently, many crypto AI initiatives promote themselves as “OpenAI + DeFi” integrations by linking external models to smart contracts. However, Ram Kumar informed Cryptonews that this only scratches the surface:

Most ‘AI + DeFi’ projects merely connect external models to smart contracts… In the absence of verifiable data attribution, transparent governance of models, and on-chain coordination of model evolution, these integrations are primarily interface layers.

He emphasizes that even advanced models such as OpenAI's depend entirely on their training data, yet data contributors are seldom acknowledged or rewarded:

Attribution enables us to assess the impact of each dataset on model behavior, fostering accountability and fairness throughout the entire AI pipeline.

These criticisms strike at the heart of the crypto AI hype. Simply integrating an OpenAI model into a smart contract does not decentralize intelligence. It keeps systems dependent on unclear, off-chain processes. Genuine on-chain AI requires data attribution, governance frameworks, and coordination of agents embedded directly within blockchain infrastructure. This vision transforms data from a passive resource into an active, rewarded asset class.
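To make the idea of data attribution concrete, here is a minimal sketch of one common approach, leave-one-out scoring: retrain a model without each dataset and see how much held-out error changes. This is an illustration only, not OpenLedger's method; the contributor names, the toy one-parameter model, and every function name are assumptions made for the example.

```python
from statistics import mean

def fit_slope(points):
    """Least-squares slope through the origin for (x, y) pairs."""
    num = sum(x * y for x, y in points)
    den = sum(x * x for x, _ in points)
    return num / den

def validation_error(slope, points):
    """Mean squared error of the model y = slope * x on held-out points."""
    return mean((y - slope * x) ** 2 for x, y in points)

def leave_one_out_attribution(datasets, validation):
    """Score each dataset by how much validation error rises when it is removed.

    Positive score: the dataset helped. Negative score: the model is
    better off without it.
    """
    all_points = [p for pts in datasets.values() for p in pts]
    base_error = validation_error(fit_slope(all_points), validation)
    scores = {}
    for name in datasets:
        rest = [p for other, pts in datasets.items() if other != name for p in pts]
        scores[name] = validation_error(fit_slope(rest), validation) - base_error
    return scores

# Hypothetical contributors; "mallory" supplies data that contradicts y = 2x.
datasets = {
    "alice": [(1.0, 2.0), (2.0, 4.0)],
    "bob": [(3.0, 6.0), (4.0, 8.0)],
    "mallory": [(1.0, 5.0), (2.0, 9.0)],
}
validation = [(1.0, 2.0), (5.0, 10.0)]

scores = leave_one_out_attribution(datasets, validation)
for name, score in sorted(scores.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{name}: {score:+.3f}")
```

In this toy setup the two consistent contributors receive positive scores and the contradictory one a negative score. Retraining once per dataset does not scale to large models, so a production system would need an approximation; the sketch only shows what "impact of each dataset on model behavior" can mean in measurable terms.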

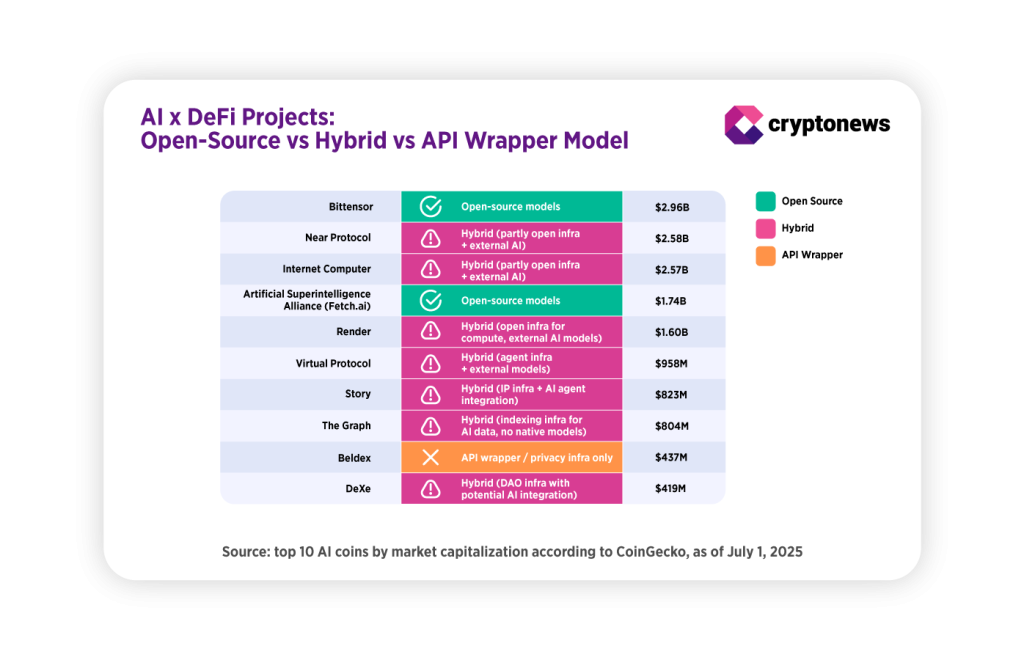

Our model type data, derived from CoinGecko and project white papers, indicates that fully open-source AI remains uncommon even among leading projects. Most employ hybrid structures that incorporate external models such as OpenAI's while keeping essential components off-chain.

From OpenAI Plugins to Active Agents

AI agents could move beyond mere task automation. Kumar envisions them as active participants in DAOs:

AI agents can evolve from passive automation tools to active contributors… proposing ideas, assessing decisions, and negotiating outcomes.

However, he cautions that their actions must be fully auditable and supported by transparent datasets to ensure accountability.

Verifiability will also be crucial for integration across protocols. He added: “It allows these agents to function with clear provenance, where their outputs can be traced back to the data and logic that informed them.”

If AI agents begin to propose or negotiate decisions within DAOs, transparency becomes vital. Without it, DAOs risk introducing non-transparent decision-making that undermines decentralization. In the trust-minimized environment of crypto, agent outputs must remain traceable to mitigate black-box risks within financial or governance protocols.
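One way to picture traceable agent outputs is a hash-chained log in which every record commits to the data and logic behind a decision. The sketch below is a simplified, in-memory illustration under stated assumptions: the record fields, agent IDs, and function names are hypothetical, and nothing here is written to a blockchain.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first record

def digest(obj):
    """Deterministic SHA-256 hex digest of a JSON-serializable object."""
    encoded = json.dumps(obj, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()

def append_record(log, agent_id, dataset_hashes, logic_version, output):
    """Append an agent decision that commits to its inputs and to the prior record."""
    body = {
        "agent_id": agent_id,
        "dataset_hashes": sorted(dataset_hashes),
        "logic_version": logic_version,
        "output": output,
        "prev_hash": log[-1]["record_hash"] if log else GENESIS_HASH,
    }
    record = dict(body, record_hash=digest(body))
    log.append(record)
    return record

def verify_log(log):
    """Return True only if every record is unmodified and correctly chained."""
    prev_hash = GENESIS_HASH
    for record in log:
        body = {k: v for k, v in record.items() if k != "record_hash"}
        if body["prev_hash"] != prev_hash or digest(body) != record["record_hash"]:
            return False
        prev_hash = record["record_hash"]
    return True

# Hypothetical DAO agent activity.
log = []
append_record(log, "dao-agent-1", [digest("price-feed-snapshot")],
              "policy-0.1", {"proposal": "lower swap fee"})
append_record(log, "dao-agent-1", [digest("treasury-report")],
              "policy-0.1", {"vote": "abstain"})
log_is_valid = verify_log(log)
```

Editing any earlier record changes its hash and breaks the chain, so `verify_log` returns `False`. That is the property an auditor would rely on when tracing an output back to the data and logic that informed it.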

What Could Go Wrong

Kumar anticipates that deeper adoption will eventually encompass infrastructure-level applications:

Deeper adoption will extend into infrastructure-level use cases, such as validators optimizing resource allocation, protocols employing AI for governance execution, and decentralized training systems coordinating directly on-chain.

Nevertheless, he warns that opaque models making unaccountable decisions present the greatest risk:

Without proper attribution, economic value can unfairly concentrate while contributors remain unrecognized.

Defective AI outputs could lead to unforeseen financial losses in DeFi or trading. Regulators may scrutinize AI systems that cannot demonstrate how decisions are made or the origins of their data. Reputationally, projects that fail to acknowledge contributors or maintain transparent governance risk undermining trust in decentralization itself.

Token utility data indicates that while market caps for AI projects remain substantial, many tokens are restricted to governance or payment functions rather than powering decentralized AI models and computation.

While AI tokens are experiencing growth, Kumar questions their genuine purpose:

Tokens only hold value when they fulfill a fundamental role in coordinating decentralized systems… If a token exists solely for speculative value or restricted access, it contributes little to the advancement of decentralized AI.

Investors may need to consider whether an AI token does more than provide pay-to-use access. Sustainable decentralized AI will require incentives for data contributors, compute providers, and model governance to align within a unified ecosystem.

Opportunities: Where Crypto AI Demonstrates Real Utility

Crypto AI agents are already exhibiting potential in areas such as DeFi automation, DAO proposal analysis, on-chain research, and cybersecurity. Kumar highlights early examples:

Morpheus is developing Solidity models for creating smart contracts and dApps. Ambiosis is working on environmental intelligence agents utilizing verified climate data. We are also collaborating with teams focused on Web3 intelligence and cybersecurity agents, all anchored to verifiable data attribution.

Transparency is the common theme. Agents managing funds or governance decisions must remain auditable to prevent systemic risks. Initial adoption will stem from wallet bots and trading assistants, while protocol-level integrations will require more time due to technical and regulatory challenges, according to Kumar:

Initial adoption will likely arise from user-facing tools where immediate value is straightforward to demonstrate, such as trading bots, research assistants, and wallet agents.

The post Why Plugging OpenAI into DeFi Isn’t Enough appeared first on Cryptonews.